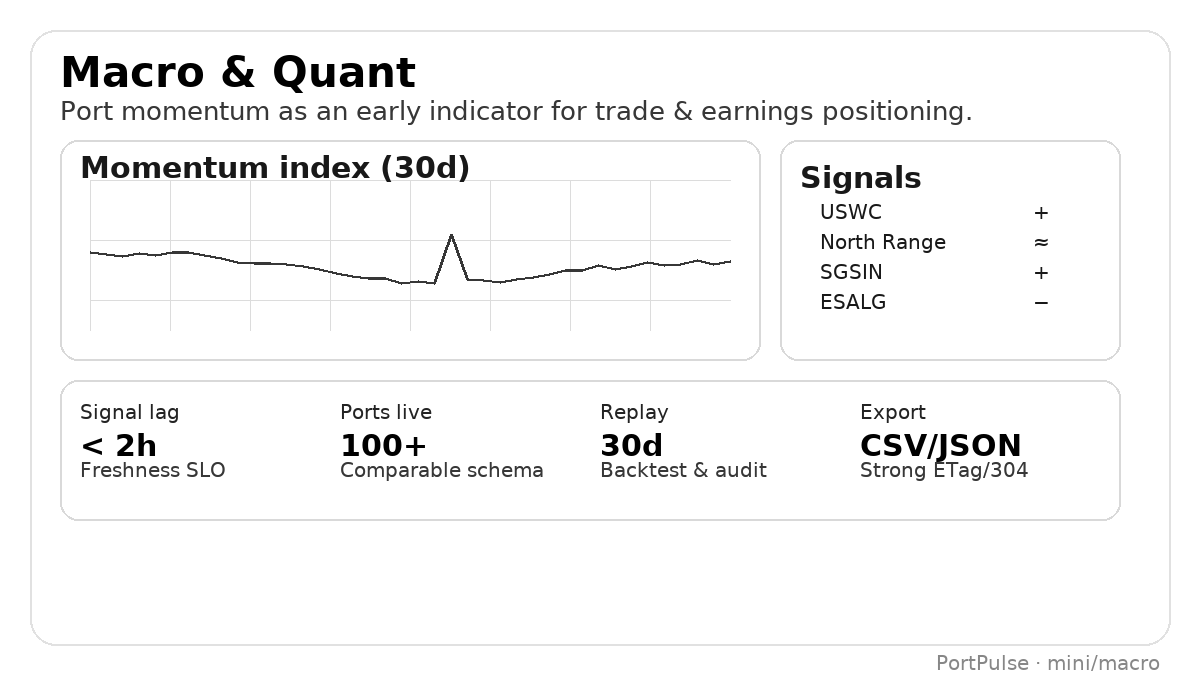

Macro & Quant

Build shipping-based throughput proxies and momentum factors with standardized, auditable series. Use replay windows to backtest; deploy to production with a stable /v1 contract.

Pain

- Heterogeneous sources; inconsistent fields and survivorship bias.

- Unclear latency; snapshots mutate without an audit trail.

- CSV vs JSON mismatch breaks pipelines and checks.

How we help

- Unified definitions (e.g., avg_wait_hours, normalized congestion_score).

- Freshness SLO (p95 ≤ 2h), as_of/last_updated, and request IDs.

- CSV/JSON parity with strong ETag/304; 30-day replay window.

Result KPIs

- Faster time-to-signal for nowcasts.

- Higher factor hit rates in live trading.

- Lower maintenance and fewer schema breaks.

Research note — shipping momentum as a proxy

A macro fund needed a robust port congestion momentum factor to improve cyclical exposure timing. DIY feeds suffered from mutation and definitional drift. With PortPulse, the team built a rolling z-score of avg_wait_hours blended with a bounded congestion_score. A 30-day replay window ensured auditability, and CSV parity simplified backtests with reproducible pulls.

```bash
curl -H "X-API-Key: DEMO_KEY" \
  "https://api.useportpulse.com/v1/ports/SGSIN/trend?days=180&fields=date,avg_wait_hours,congestion_score"
```

Replay windows + timestamps → stable research & live parity. Use ETags for efficient re-pulls.
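A minimal sketch of the research note's signal in Python, assuming the trend pull has been parsed into two aligned, gap-free daily lists (`avg_wait_hours`, and `congestion_score` on a 0 to 1 scale). The blend rule `(1 - weight) * z + weight * congestion_score` is our own reading of `"blend": { "congestion_score": 0.3 }` in the Signal spec further down, not a documented PortPulse formula; `rolling_zscore` and `blended_signal` are hypothetical helper names.

```python
from statistics import mean, stdev

def rolling_zscore(values, window):
    """Z-score of each point against the trailing `window` points, current point included.

    Returns None until a full window exists, or when the window has zero variance.
    `window` must be at least 2 (stdev needs two points).
    """
    out = []
    for i in range(len(values)):
        if i + 1 < window:
            out.append(None)
            continue
        win = values[i + 1 - window : i + 1]  # only data up to and including day i
        sd = stdev(win)
        out.append(None if sd == 0 else (values[i] - mean(win)) / sd)
    return out

def blended_signal(avg_wait_hours, congestion_score, window=60, weight=0.3):
    """Hypothetical blend: (1 - weight) * z(avg_wait_hours) + weight * congestion_score."""
    z = rolling_zscore(avg_wait_hours, window)
    return [
        None if zi is None else (1 - weight) * zi + weight * cs
        for zi, cs in zip(z, congestion_score)
    ]
```

Because each window ends at the current day, every value can be computed on its own date, which keeps replayed backtests and live runs consistent.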

Macro/Quant playbook — design → backtest → deploy

Design

- Choose ports that proxy your trade flows or indices.

- Normalize with congestion_score for cross-port comparability.

- Use p95 wait hours rather than maxima to limit the impact of outliers.
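To see why a high percentile is steadier than a maximum, here is a small sketch on hypothetical vessel-level wait times for one port: 99 calls at 10 hours and one stuck vessel at 500 hours. It uses the standard library's `statistics.quantiles`; whether PortPulse computes its own p95 the same way is not stated here.

```python
from statistics import quantiles

def p95(values):
    """95th percentile: the last of the 19 cut points that split the data into 20 groups."""
    return quantiles(values, n=20)[-1]

# Hypothetical per-vessel wait times (hours) for a single port and day.
waits = [10.0] * 99 + [500.0]
print(max(waits))  # 500.0, driven entirely by one vessel
print(p95(waits))  # 10.0, unaffected by the single outlier
```

A p95 still moves once roughly 5% of calls are extreme, so it reacts to broad congestion rather than to one-off delays.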

Backtest

- Pull replay windows and freeze vintages for audit.

- Aggregate daily series into weekly factors to match your re-hedge cadence.

- Prevent look-ahead bias by aligning timestamps explicitly: treat each value as usable only from its as_of time onward.
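A sketch of the weekly aggregation and look-ahead guard together: daily values are averaged into weekly buckets, and a value counts only if its `as_of` timestamp falls on or before the weekly cutoff. The `(obs_date, as_of, value)` row shape, the end-of-day UTC cutoff, and the `weekly_factor` name are assumptions for illustration; it also assumes one vintage per observation date.

```python
from datetime import date, datetime, time, timedelta, timezone
from statistics import mean

def weekly_factor(rows, week_ends):
    """Weekly mean of daily values, using only rows already published at each cutoff.

    rows: iterable of (obs_date: date, as_of: timezone-aware datetime, value: float)
    week_ends: dates; each week covers week_end - 6 days through week_end.
    Assumes one vintage per obs_date (hypothetical simplification).
    """
    rows = list(rows)
    out = {}
    for we in week_ends:
        start = we - timedelta(days=6)
        # Hypothetical rebalance cutoff: end of the week-end date in UTC.
        cutoff = datetime.combine(we, time(23, 59, 59), tzinfo=timezone.utc)
        vals = [v for d, as_of, v in rows if start <= d <= we and as_of <= cutoff]
        out[we] = mean(vals) if vals else None  # None: nothing published yet
    return out
```

If each daily value is published the following morning, the week ending on a given date sees only six of its seven observations at the cutoff, which is exactly what a live re-hedge on that date would have seen.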

Deploy

- Guardrails on freshness; alert on SLO breach.

- Cache with ETag/304; fall back to last good snapshot if needed.

- Version factors and store request IDs for reproducibility.
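A sketch of the Deploy guardrails, written against an injected `fetch(url, headers)` function so it can sit on top of any HTTP client. It revalidates the cached payload with `If-None-Match`, treats 304 as "unchanged", falls back to the last good snapshot on network errors or non-200 replies, and flags payloads whose `last_updated` is older than 2 hours. `SnapshotCache`, `is_stale`, and the tuple returned by `fetch` are hypothetical; using 2 hours as a per-pull alert line is our stricter reading of the p95 freshness SLO.

```python
from datetime import datetime, timedelta, timezone

FRESHNESS_LIMIT = timedelta(hours=2)  # per-pull alert line borrowed from the p95 SLO

class SnapshotCache:
    """Last good payload per URL, revalidated with If-None-Match (hypothetical helper)."""

    def __init__(self, fetch):
        # fetch(url, headers) -> (status: int, headers: dict, body); may raise OSError
        self.fetch = fetch
        self.store = {}  # url -> (etag, body)

    def get(self, url):
        etag, body = self.store.get(url, (None, None))
        headers = {"If-None-Match": etag} if etag else {}
        try:
            status, resp_headers, resp_body = self.fetch(url, headers)
        except OSError:
            if body is None:
                raise
            return body, "fallback"  # network error: serve the last good snapshot
        if status == 304 and body is not None:
            return body, "not_modified"  # server confirms the cached copy is current
        if status == 200:
            self.store[url] = (resp_headers.get("ETag"), resp_body)
            return resp_body, "fresh"
        if body is not None:
            return body, "fallback"  # e.g. 5xx: keep serving the last good snapshot
        raise RuntimeError(f"HTTP {status} and no cached snapshot for {url}")

def is_stale(last_updated, now=None):
    """True if an ISO 8601 last_updated (with UTC offset) is older than the limit."""
    now = now or datetime.now(timezone.utc)
    return now - datetime.fromisoformat(last_updated) > FRESHNESS_LIMIT
```

Returning a status label alongside the body lets the caller log it next to the request ID, so a factor value computed from a fallback snapshot is visible in the audit trail.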

Signal — rolling z-score of wait hours

```json
{
  "ports": ["SGSIN", "NLRTM", "USLAX"],
  "lookback": "180d",
  "signal": "zscore(avg_wait_hours, window=60)",
  "blend": { "congestion_score": 0.3 }
}
```

Event — change-point on congestion
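The config that follows asks for a notification when SGSIN's `congestion_score` shifts by at least 0.15. How the service's `change_point` method works is not described here, so this sketch swaps in a naive single-split mean-shift search: it tries every split that leaves at least `min_segment` points on each side and reports the split with the largest absolute change in mean. `detect_shift` and `min_segment` are hypothetical.

```python
from statistics import mean

def detect_shift(series, min_magnitude=0.15, min_segment=7):
    """Naive single change-point search by absolute mean shift.

    Returns (index, shift), where series[index:] is the new regime, or None if
    no split moves the mean by at least min_magnitude.
    """
    best = None
    # Each candidate split keeps at least min_segment points on both sides.
    for i in range(min_segment, len(series) - min_segment + 1):
        shift = mean(series[i:]) - mean(series[:i])
        if best is None or abs(shift) > abs(best[1]):
            best = (i, shift)
    if best is None or abs(best[1]) < min_magnitude:
        return None
    return best
```

Production change-point methods (CUSUM, penalized multi-split searches) handle noise and multiple regimes better; this version is only meant to make the `min_magnitude` threshold concrete.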

```json
{
  "port": "SGSIN",
  "series": "congestion_score",
  "method": "change_point",
  "min_magnitude": 0.15,
  "action": { "type": "notify", "channel": "research-slack" }
}
```

Quick demo — simple momentum

Create a bounded momentum signal (0–1) from the last 90 days, then test its correlation with your target series.

```bash
curl -s -H "X-API-Key: DEMO_KEY" \
  "https://api.useportpulse.com/v1/ports/NLRTM/trend?days=90&fields=date,avg_wait_hours,congestion_score" | jq '.'

# momentum = 0.6 * zscore(avg_wait_hours, 45d) + 0.4 * max(congestion_score_last_7d)
```
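A sketch of the momentum formula above, assuming gap-free daily lists and a `congestion_score` in the 0 to 1 range. A raw z-score is unbounded, so to keep the result within 0 to 1 as the demo intends, this version passes it through a logistic function first; that squash is our assumption, not part of the original formula. `zscore_last`, `momentum`, and `momentum_series` are hypothetical names.

```python
from math import exp
from statistics import mean, stdev

def zscore_last(values, window):
    """Z-score of the latest value against the trailing window (latest value included)."""
    win = values[-window:]
    sd = stdev(win)  # needs at least 2 points
    return 0.0 if sd == 0 else (win[-1] - mean(win)) / sd

def momentum(avg_wait_hours, congestion_score, z_window=45, cs_window=7):
    """0.6 * logistic(z) + 0.4 * max(recent congestion_score); in [0, 1] if scores are."""
    z = zscore_last(avg_wait_hours, z_window)
    squashed = 1.0 / (1.0 + exp(-z))  # assumption: maps the unbounded z-score into (0, 1)
    return 0.6 * squashed + 0.4 * max(congestion_score[-cs_window:])

def momentum_series(avg_wait_hours, congestion_score, z_window=45, cs_window=7):
    """One value per day from day z_window onward, each using only data up to that day."""
    return [
        momentum(avg_wait_hours[: t + 1], congestion_score[: t + 1], z_window, cs_window)
        for t in range(z_window - 1, len(avg_wait_hours))
    ]
```

To test correlation with a target series, align the target to the same dates (the first momentum value corresponds to day 45 of the pull) and pass both lists to `statistics.correlation` (Python 3.10+).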

API endpoints (v1)

- GET /v1/health

- GET /v1/meta/sources

- GET /v1/ports/{code}/overview

- GET /v1/ports/{code}/trend

- GET /v1/ports/{code}/snapshot

- GET /v1/ports/{code}/dwell

- GET /v1/ports/{code}/alerts

- GET /v1/hs/{code}/imports (beta)

Contract-first: v1 is frozen; breaking changes move to v1beta with a ≥90-day deprecation window.
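A minimal sketch of calling the frozen contract from Python with only the standard library. It builds the same trend request as the curl examples, with `/v1` hard-coded so a client never drifts onto `v1beta` by accident. `trend_request` is a hypothetical helper; the other endpoints in the list follow the same path pattern.

```python
from urllib.parse import quote, urlencode
from urllib.request import Request

BASE_URL = "https://api.useportpulse.com/v1"  # pin the frozen contract explicitly

def trend_request(port, days, fields, api_key):
    """Build (without sending) GET /v1/ports/{code}/trend with the X-API-Key header."""
    query = urlencode({"days": days, "fields": ",".join(fields)}, safe=",")
    url = f"{BASE_URL}/ports/{quote(port, safe='')}/trend?{query}"
    return Request(url, headers={"X-API-Key": api_key})

req = trend_request("SGSIN", 180, ["date", "avg_wait_hours", "congestion_score"], "DEMO_KEY")
print(req.full_url)
# https://api.useportpulse.com/v1/ports/SGSIN/trend?days=180&fields=date,avg_wait_hours,congestion_score
```

Passing `req` to `urllib.request.urlopen` sends it. Internally `urllib` stores the header as `X-api-key` because it capitalizes header names; HTTP treats header names case-insensitively, so this does not change what the server sees.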

Comparable by design

Normalized fields across ports; inspectable definitions & methods.

Replay & audit

30-day replay; timestamps & request IDs; CSV parity for research jobs.

Production-ready

ETags, freshness SLOs, and a frozen /v1 contract for stable deployment.

Ship your factor faster

Start a 14-day evaluation (up to 5 ports) or talk to us about coverage packs tailored to your universe.

FAQ

How do you prevent series mutation?

We expose as_of/last_updated and provide a 30-day replay window. Keep vintages to lock research.
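One way to keep vintages, sketched under assumptions: each pull is serialized canonically (sorted keys), hashed with SHA-256, and written once to a file named by its `as_of` value and hash, alongside the request ID. The payload shape (a JSON object with a top-level `as_of`) and the `vintage_record` / `freeze_vintage` names are hypothetical.

```python
import hashlib
import json
from pathlib import Path

def vintage_record(payload, request_id):
    """Return (filename, text) for an immutable copy of one pull.

    Assumes payload is a JSON object with a top-level "as_of" string (hypothetical shape).
    """
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    as_of = str(payload.get("as_of", "unknown")).replace(":", "-")  # filesystem-safe
    filename = f"{as_of}_{digest[:16]}.json"
    text = json.dumps(
        {"request_id": request_id, "sha256": digest, "payload": payload},
        sort_keys=True,
        indent=2,
    )
    return filename, text

def freeze_vintage(payload, request_id, root="vintages"):
    """Write the record once; an identical payload maps to the same file and is not rewritten."""
    filename, text = vintage_record(payload, request_id)
    path = Path(root) / filename
    if not path.exists():
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text, encoding="utf-8")
    return path
```

Hashing the canonical form means a re-pull that returns identical data maps to the same file, while any mutation of the series produces a new, separately auditable vintage.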

Do CSV and JSON match 1:1?

Yes. CSV is a first-class contract with strong ETag/304 for efficient pulls.
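If you want to verify parity in your own pipeline, a small check can compare CSV rows with JSON records field by field, normalizing numbers so that `3.50` and `3.5` match. It assumes both responses list the same rows in the same order and that the JSON records have already been extracted into a list of dicts; `parity_mismatches` is a hypothetical helper.

```python
import csv
import io

def _norm(value):
    """Normalize a cell: empty/None -> "", numeric -> float repr, anything else -> str."""
    if value is None or value == "":
        return ""
    try:
        return repr(float(value))
    except (TypeError, ValueError):
        return str(value)

def parity_mismatches(csv_text, json_rows, fields):
    """Return (row_index, field, csv_value, json_value) for every cell that differs."""
    csv_rows = list(csv.DictReader(io.StringIO(csv_text)))
    if len(csv_rows) != len(json_rows):
        return [("row_count", None, len(csv_rows), len(json_rows))]
    return [
        (i, f, c.get(f), j.get(f))
        for i, (c, j) in enumerate(zip(csv_rows, json_rows))
        for f in fields
        if _norm(c.get(f)) != _norm(j.get(f))
    ]
```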

Can we get region/sector packs?

Yes. Talk to us about Port Packs and Hi-Confidence add-ons for your scope.